Using Artificial Intelligence to Strengthen Cybersecurity Defenses

Learn how AI helps detect cyber threats, stop phishing attacks, and strengthen security with faster, smarter defenses. Understand how modern systems adapt to complex and emerging attacks.

Artificial intelligence is becoming central to modern cybersecurity, driven by threats whose complexity traditional security systems were not built to handle. Conventional, rules-based tools tend to struggle with threats they have not seen before. Polymorphic malware that rewrites its own code, zero-day exploits, and AI-generated phishing emails are all examples of attacks that can slip past signature-based defenses entirely.

In response, defensive artificial intelligence is increasingly being applied to strengthen these defenses in ways that static rule sets cannot. Machine learning models can sift through millions of network events, endpoint logs, and user activity records to surface anomalies such as a device communicating with an unusual external server or a user account accessing sensitive files outside of normal working hours. In traditional systems, this same process would rely on manually configured rules and thresholds, requiring security teams to anticipate every possible anomaly in advance. Analysts would need to review large volumes of raw log data by hand, making it both time-intensive and prone to missed detections, particularly when threats are subtle or unfamiliar. When a genuine threat is identified, artificial intelligence can automate containment actions such as isolating a compromised endpoint, revoking access credentials, or triggering an alert for human review, reducing the time between detection and response. This article explains how defensive artificial intelligence is being applied to counter specific, modern automated threats.
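As a rough illustration of the baseline idea described above, the sketch below flags a file access at an unusual hour and a connection to a destination the device has not contacted before. It is a minimal statistical stand-in for a trained model, not a production detector: the function names, the sample history, and the threshold of 3 standard deviations are all assumptions made for the example, and treating the hour as a plain number ignores the fact that the clock wraps around at midnight.

```python
from statistics import mean, stdev

def build_baseline(hours):
    """Summarise historical access hours as (mean, sample standard deviation)."""
    return mean(hours), stdev(hours)

def is_unusual_hour(hour, baseline, threshold=3.0):
    """Flag an access whose hour lies more than `threshold` deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        return hour != mu
    return abs(hour - mu) / sigma > threshold

def is_new_destination(destination, known_destinations):
    """Flag a connection to a server this device has not contacted before."""
    return destination not in known_destinations

# Hypothetical history for one user account: hours of the day (0-23) of past file accesses.
history = [9, 10, 11, 9, 14, 15, 10, 16, 13, 11]
baseline = build_baseline(history)

flag_3am = is_unusual_hour(3, baseline)     # far outside normal working hours
flag_10am = is_unusual_hour(10, baseline)   # well within the usual pattern

# Hypothetical set of servers the device normally talks to.
known = {"updates.example.com", "mail.example.com"}
flag_server = is_new_destination("files.example.net", known)
```

In practice a model would combine many such signals rather than alert on any single one, which is what keeps the volume of false alarms manageable.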

Stopping Automated Social Engineering and Deepfakes

Generative artificial intelligence makes it easy for attackers to create highly targeted phishing campaigns and synthetic media. Attackers use automated scripts to scrape publicly available information from social media profiles, then feed this data into language models to generate personalized, contextually relevant phishing emails that are difficult to distinguish from legitimate ones. The integration of deepfakes into phishing campaigns has led to a new era of fraud called 'Social Engineering 2.0'. Deep learning models can now create hyper-realistic voice and video clones, which attackers use to mimic executives in real time and authorize fraudulent transactions. In one notable case, a finance worker in Hong Kong transferred $25 million after being deceived by a video conference call in which every other participant was a deepfake recreation of a colleague [1]. The statistics point the same way: recent reports indicate that deepfake tool trading on the dark web has increased by 223%, and that 82.6% of phishing emails now use AI-generated content to bypass traditional signature-based filters [2].

To stop these advanced scams, defensive artificial intelligence uses natural language processing and large language models to analyze the content and context of emails for signs of fraud. Rather than blocking on keywords alone, these systems can establish a baseline of how a specific sender typically writes and flag deviations from it, such as unusual urgency or shifts in language and tone, alongside technical signals such as domain spoofing. To catch deepfakes, models are trained on datasets of manipulated audio and video so that they learn the subtle patterns that distinguish synthetic media from genuine recordings, including irregular eye-blinking rates, lip-sync errors, irregular audio frequencies, lighting inconsistencies, and unnatural facial movements.
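The sender-baseline idea can be sketched with a couple of hand-picked style features. This is only a toy illustration, assuming a small urgency word list and two features chosen for the example; real systems rely on learned language representations rather than word counts, and the names `URGENCY_TERMS`, `sender_baseline`, and `deviation_score` are hypothetical.

```python
import re
from statistics import mean

# Illustrative word list only; a deployed system would learn such cues from data.
URGENCY_TERMS = {"urgent", "immediately", "asap", "now", "today", "wire"}

def features(text):
    """Extract two simple style features from an email body."""
    words = re.findall(r"[a-z']+", text.lower())
    n = max(len(words), 1)
    return {
        "urgency_rate": sum(w in URGENCY_TERMS for w in words) / n,
        "exclaim_rate": text.count("!") / n,
    }

def sender_baseline(past_emails):
    """Average each feature over a sender's earlier emails."""
    feats = [features(e) for e in past_emails]
    return {k: mean(f[k] for f in feats) for k in feats[0]}

def deviation_score(email, baseline):
    """Sum of absolute differences between this email and the sender's baseline."""
    f = features(email)
    return sum(abs(f[k] - baseline[k]) for k in baseline)

past = [
    "Hi team, the quarterly report is attached for review.",
    "Thanks for the update, let's discuss at the Thursday meeting.",
]
baseline = sender_baseline(past)

suspicious = "URGENT! Wire the payment immediately, I need this done now!"
routine = "Hi team, the agenda for Thursday is attached."

THRESHOLD = 0.2  # assumed cut-off for this example
suspicious_flag = deviation_score(suspicious, baseline) > THRESHOLD
routine_flag = deviation_score(routine, baseline) > THRESHOLD
```

The design point is that the score is relative to one sender's history, so a colleague who habitually writes terse, urgent emails would not be flagged for doing so again.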

Catching Evasive and Polymorphic Malware

Traditional endpoint security tools rely on signature-based detection, meaning they check files against databases of known malicious hashes or strings. Attackers bypass this by using artificial intelligence to write polymorphic malware, which changes its code every time it runs. For example, the tool BlackMamba uses large language models to rewrite its code dynamically, while the threat group STORM-0817 builds malware that alters its structure to mimic normal system operations [3].

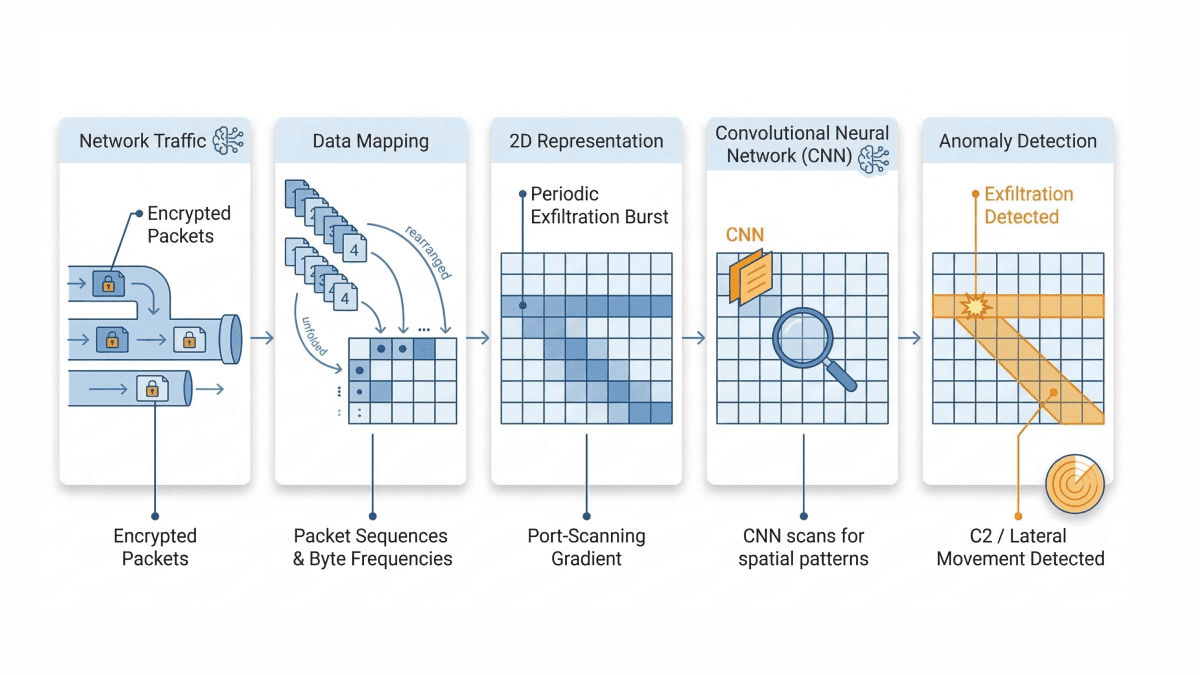

Defensive artificial intelligence addresses this problem by focusing on behavior instead of static code signatures. A signature is a static file hash or a sequence of bytes on disk, which only identifies threats that have been seen before. Behavior refers to what a file actually does when it executes in memory. Machine learning models learn the normal activity baseline for every device on the network, including patterns such as which processes typically run at startup, what files a user normally accesses, and what external connections a device regularly makes. If a new file executes and tries to modify registry keys or disable security policies, the behavioral analytics engine flags it as malicious regardless of what the code looks like. Deep learning is also applied in network detection systems, where packet sequences and byte frequencies are mapped into two-dimensional arrays, allowing convolutional neural networks to spot spatial patterns in the data. These models can detect anomalies such as periodic exfiltration bursts or port-scanning gradients that signal command-and-control communication or lateral movement, even when the payload itself is encrypted.
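To make the mapping into two-dimensional arrays more concrete, the sketch below turns the first bytes of a packet payload into a fixed-size grid of values between 0 and 1, which is the kind of input a convolutional network expects. The 8 by 8 grid, the zero padding, and the function name `bytes_to_grid` are assumptions chosen for illustration; the article does not specify how any particular product performs this step.

```python
def bytes_to_grid(payload: bytes, width: int = 8, height: int = 8):
    """Map the first width*height payload bytes onto a 2-D grid scaled to [0, 1].

    Shorter payloads are padded with zero bytes; longer ones are truncated.
    """
    size = width * height
    padded = payload[:size].ljust(size, b"\x00")
    return [
        [padded[row * width + col] / 255 for col in range(width)]
        for row in range(height)
    ]

# Tiny example: three payload bytes laid out on a 2 x 2 grid.
grid = bytes_to_grid(b"\xff\x00\x80", width=2, height=2)
```

Because only byte values and their positions are used, the same representation can be built for encrypted payloads, which is why structural patterns remain visible to the model even when the content is not.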

Managing Autonomous Agents and Identity Risks

Many businesses are adopting autonomous artificial intelligence agents to handle tasks like managing calendars and writing code. A recent example is OpenClaw, a popular open-source agent framework that runs locally on a user's machine and requires elevated system permissions to execute tasks autonomously. Because these agents operate with high-level permissions, they introduce serious identity security risks if not properly secured. Security researchers found tens of thousands of OpenClaw instances exposed online where default settings had been left unchanged, authentication had not been configured, and agent endpoints were left publicly accessible without access controls. A specific vulnerability, tracked as CVE-2026-25253, allowed attackers to run arbitrary code on a user's machine through a malicious web link [4]. Additionally, the skill marketplace for these agents contained hundreds of malicious packages designed to steal API keys and passwords.

To secure environments against compromised agents, Identity and Access Management systems enforce access controls, while artificial intelligence provides risk scoring to flag unusual or potentially unauthorized activity. Machine learning models run User and Entity Behavior Analytics, a process that establishes behavioral baselines for both human users and non-human identities such as artificial intelligence agents. For non-human identities, this works by mapping normal API call graphs and execution chains. If an agent like OpenClaw typically queries a calendar API but suddenly attempts a POST request to a customer database API, the model detects this structural deviation in the API call sequence and raises an alert. Zero-trust principles are also applied by granting agents only temporary permissions and requiring human approval for high-risk actions.
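The call-graph baseline for a non-human identity can be sketched as a set of observed transitions between API calls, with any transition outside that set treated as a structural deviation. This is a simplified stand-in for a learned model, and the endpoint strings and function names are hypothetical examples rather than real OpenClaw or vendor APIs.

```python
def call_edges(calls):
    """Turn an ordered list of API calls into (previous, next) edges."""
    calls = list(calls)
    return list(zip(["<start>"] + calls, calls))

def build_call_graph(sessions):
    """Collect every edge seen across an agent's normal sessions."""
    edges = set()
    for calls in sessions:
        edges.update(call_edges(calls))
    return edges

def unseen_edges(calls, baseline_edges):
    """Return the edges in a new session that never appeared in the baseline."""
    return [edge for edge in call_edges(calls) if edge not in baseline_edges]

# Hypothetical history: the agent only ever works with a calendar API.
normal_sessions = [
    ["GET /calendar/events", "POST /calendar/events"],
    ["GET /calendar/events"],
]
baseline_edges = build_call_graph(normal_sessions)

# New session: a calendar query followed by a write to a customer database.
observed = ["GET /calendar/events", "POST /customers/records"]
alerts = unseen_edges(observed, baseline_edges)
```

Here `alerts` holds the single transition from the calendar query to the customer database write, which is the point at which an alert or a human approval step would be triggered.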

Defending Against Data Poisoning

Data poisoning is an attack in which malicious actors tamper with the training data used to build a machine learning model. By injecting corrupted samples, an attacker can plant a hidden backdoor that causes the model to behave incorrectly under specific conditions. In health care, research shows that poisoning just 100 to 500 samples in a large dataset is enough to make an artificial intelligence model systematically misdiagnose certain patients [5]. In another example, digital artists use a tool called Nightshade to protect their work from unauthorized use in artificial intelligence training [6]. Nightshade works by adding carefully calculated pixel-level noise to an image, changes that are invisible to the human eye but are designed to push the image toward a different category in the feature space that a machine learning model uses to classify objects. When artificial intelligence companies scrape these images for training, the poisoned data causes their models to misclassify common objects. Although artists use Nightshade defensively, it illustrates how pixel-level noise injected into images can function as poisoned training data.

To prevent data poisoning, artificial intelligence algorithms validate and clean training datasets before they are processed. Rather than simply scanning for duplicate entries, these tools apply techniques such as activation clustering, which works by examining the internal representations a model builds from its training data to identify whether any subset of samples is pushing the model toward a hidden, unintended behavior. During development, engineers apply methods such as data provenance cryptography, which cryptographically signs datasets to verify they have not been tampered with, and Differentially Private Stochastic Gradient Descent, which limits how much any individual training sample can influence the final model, reducing the impact of injected poisoned data. Ensemble monitoring systems such as the MEDLEY framework are also used. Unlike traditional ensemble approaches that force all models to agree on a single answer, MEDLEY keeps track of where individual models disagree with each other. This means that if one model has been affected by poisoned data and starts producing unexpected outputs, it does not get buried under a majority vote. Instead, that disagreement is flagged and made visible for human review before it can cause harm.
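The difference between majority voting and disagreement tracking can be shown in a few lines. The sketch below is not the MEDLEY implementation, whose internals the article does not describe; it only illustrates the general principle of keeping dissenting models visible, and the model names, labels, and `review_prediction` function are made up for the example.

```python
from collections import Counter

def review_prediction(predictions):
    """Summarise an ensemble's output without discarding the minority view.

    `predictions` maps a model name to the label that model produced.
    """
    counts = Counter(predictions.values())
    majority_label, _ = counts.most_common(1)[0]
    dissenters = sorted(
        name for name, label in predictions.items() if label != majority_label
    )
    return {
        "majority": majority_label,
        "dissenters": dissenters,
        "needs_review": bool(dissenters),
    }

# Hypothetical case: two models agree, one (possibly poisoned) does not.
result = review_prediction(
    {"model_a": "benign", "model_b": "benign", "model_c": "malignant"}
)
```

A plain majority vote would report only "benign" here. Keeping `dissenters` in the result is what allows a reviewer to ask why one model diverged, whether because it was trained on tampered data or because it caught something the others missed.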

Speeding Up Security Operations

In late 2025, security researchers detected a campaign, attributed to a Chinese state-sponsored group, that used an autonomous AI framework. The attackers reportedly manipulated AI tools such as Claude Code to conduct reconnaissance, write exploit code, and exfiltrate data, with the AI performing 80 to 90 percent of the campaign autonomously [7].

To keep pace with attacks automated to this degree, artificial intelligence is deployed directly into Security Operations Centers. AI-powered analyst systems connect with existing security tools to collect and correlate data from endpoints, cloud servers, and network logs into a unified timeline of events. When an alert triggers, the system investigates by automatically mapping the sequence of actions that preceded it, identifying which process spawned a suspicious file, which user account was involved, what network connections were made, and whether similar patterns appear in global threat intelligence feeds. This automated investigation filters out false alarms and surfaces a structured summary of the incident for human operators. If a severe attack is confirmed, the system can take immediate containment actions by calling on integrated security tools, for example instructing an endpoint detection platform to isolate a compromised device from the network, sending an API call to an identity management system to suspend a flagged account, or pushing a firewall rule to block a malicious IP address in real time [8]. By handling the investigative and containment steps that would otherwise require manual effort, artificial intelligence allows human experts to focus on strategic decisions and complex problem solving.
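The investigation flow described above, correlating events into one timeline and then proposing containment steps, might be sketched as follows. Everything here is a simplified assumption: the `Event` fields, the action names `block_ip` and `isolate_host`, and the sample data are invented for illustration and do not correspond to any specific product's API. The sketch only plans actions; executing them through real endpoint, identity, or firewall tools is left out.

```python
from dataclasses import dataclass

@dataclass
class Event:
    timestamp: float
    source: str   # e.g. "endpoint", "network", "identity"
    host: str
    detail: str

def build_timeline(events, host):
    """Merge events from all sources for one host into chronological order."""
    return sorted((e for e in events if e.host == host), key=lambda e: e.timestamp)

def plan_containment(timeline, known_bad_ips):
    """Propose containment actions when the timeline touches a known-bad IP."""
    actions = []
    for event in timeline:
        if event.source == "network" and event.detail in known_bad_ips:
            actions.append(("block_ip", event.detail))
            isolate = ("isolate_host", event.host)
            if isolate not in actions:
                actions.append(isolate)
    return actions

# Hypothetical events arriving out of order from different tools.
events = [
    Event(30.0, "network", "laptop-7", "203.0.113.9"),
    Event(10.0, "endpoint", "laptop-7", "powershell.exe spawned dropper.exe"),
    Event(20.0, "identity", "laptop-7", "login by j.doe"),
    Event(15.0, "network", "server-2", "198.51.100.4"),
]

timeline = build_timeline(events, "laptop-7")
actions = plan_containment(timeline, known_bad_ips={"203.0.113.9"})
```

Returning a list of proposed actions rather than executing them directly leaves room for a human approval step on high-impact responses such as isolating a device.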

Conclusion

The cybersecurity landscape requires dynamic defense strategies. Attackers use machine learning to scale phishing campaigns, mutate malware, and exploit system weaknesses rapidly. In response, organizations rely on defensive artificial intelligence to monitor unusual behavior, control machine identities, secure training data, and accelerate incident response. Implementing these AI-driven security operations is among the most practical ways to secure digital networks against automated threats.

Rootcode helps governments and enterprises turn AI ambition into practical, working reality. Our solutions are designed to integrate with your existing infrastructure, keep your data secure, and deliver results that are clear, measurable, and built for the long term. If you are ready to make AI work for your organization, let's talk.
